What’s the Strategy of Russia’s Internet Trolls? We Analyzed Their Tweets to Find Out.

We find that IRA-operated Twitter accounts shared less junk news than one might have expected — relying instead on local news sources.

Authors

- Franziska Roscher,

- Leon Yin,

- Richard Bonneau,

- Jonathan Nagler,

- Joshua A. Tucker

This article was originally published at The Washington Post.

As U.S. citizens cast their ballots in this month’s midterm election, Facebook announced its suspicions that the Russia-based Internet Research Agency (IRA) was attempting to interfere. But what, exactly, was the online strategy of this “troll farm” that special counsel Robert S. Mueller III had already indicted in connection with meddling in the 2016 U.S. presidential campaign?

To understand the IRA’s approach, we looked at what it had done before the 2016 election. We analyzed all known IRA-operated Twitter accounts to see what kind of material the trolls shared. We found that they shared less junk news than one might have expected, relying instead on local news sources.

How we did our research

Our study drew on a data set, shared online by Twitter’s elections integrity initiative, of more than 9 million tweets sent by about 3,600 IRA-linked accounts in 2016. Because we were primarily interested in tweets with links that had been shared in the United States, we discarded tweets without links as well as accounts that posted in Russian. That left us with 556 accounts that tweeted about 209,000 links between January and November.
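As a rough illustration of this filtering step, here is a minimal Python sketch. It is not the code used in the study. The field names (`account_id`, `account_language`, `urls`) and the toy records are assumptions made for the example, and the actual data set may be structured differently.

```python
def filter_link_tweets(tweets, excluded_language="ru"):
    """Keep tweets that carry at least one link and whose account
    does not post in the excluded language (hypothetical field names)."""
    return [
        t for t in tweets
        if t.get("urls") and t.get("account_language") != excluded_language
    ]


def summarize(tweets):
    """Return (number of distinct accounts, total number of links)."""
    accounts = {t["account_id"] for t in tweets}
    links = sum(len(t["urls"]) for t in tweets)
    return len(accounts), links


# Toy records for illustration only; these are not real tweets.
sample_tweets = [
    {"account_id": "a1", "account_language": "en",
     "urls": ["https://news.example/1", "https://news.example/2"]},
    {"account_id": "a1", "account_language": "en", "urls": []},
    {"account_id": "a2", "account_language": "ru",
     "urls": ["https://news.example/3"]},
    {"account_id": "a3", "account_language": "en",
     "urls": ["https://news.example/4"]},
]

kept = filter_link_tweets(sample_tweets)
n_accounts, n_links = summarize(kept)
print(f"{n_accounts} accounts shared {n_links} links")
```

Counting accounts and links after filtering, as `summarize` does, is how figures such as "556 accounts" and "about 209,000 links" would be obtained under these assumptions.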

To compare the trolls’ tweeting to “normal” Twitter behavior, we also collected tweets during the same period from two comparison groups: politically engaged users and a random sample of U.S. users. These tweets were collected by New York University’s Social Media and Political Participation (SMaPP) lab. The sample of random Twitter users contains 1,344 accounts that tweeted about 106,000 links; the sample of politically engaged users encompasses 1,952 accounts that shared about 437,000 URLs. Find our full analysis and additional methodological details in a newly released SMaPP Data Report.

Junk news? Not so much.

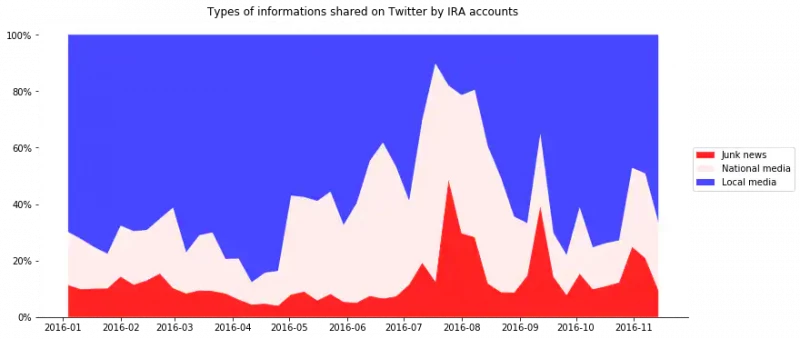

Only about 6 percent of all links the trolls shared on Twitter led to what Merrimack College researchers classified as known junk news sites: sites that contained, for example, extreme bias, conspiracy theories, rumors or junk science. Still, the trolls linked to such sites more often than the average politically interested user (4 percent of all links) and much more often than the average Twitter user (0.4 percent of links).

But the number of links to junk news sites spiked sharply in the run-up to the election, suggesting that the trolls put extra effort into spreading falsehoods as Election Day approached. At its peak, in a single week in September 2016, the IRA tweeted more than 2,000 links to junk news sites, five to 10 times as many as in a typical earlier week.
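One way to make a comparison like "peak week versus typical previous weeks" concrete is to count links per calendar week and divide the peak by the median of the earlier weeks. The Python sketch below does that. The use of the median as "typical", the Monday-based week boundaries and the toy dates are all assumptions for illustration, not the method of the study.

```python
from collections import Counter
from datetime import date, timedelta
from statistics import median


def week_start(d):
    """Return the Monday of the week that contains date d."""
    return d - timedelta(days=d.weekday())


def weekly_counts(dates):
    """Count how many links were shared in each Monday-based week."""
    return Counter(week_start(d) for d in dates)


def peak_vs_typical(counts):
    """Return (peak week, peak count / median count of earlier weeks).

    The ratio is None when no week precedes the peak week.
    """
    peak_week, peak = max(counts.items(), key=lambda item: item[1])
    earlier = [n for week, n in counts.items() if week < peak_week]
    if not earlier:
        return peak_week, None
    return peak_week, peak / median(earlier)


# Toy dates of junk-news links, for illustration only.
toy_dates = (
    [date(2016, 9, 5), date(2016, 9, 6)]      # week of Sept. 5: 2 links
    + [date(2016, 9, 12), date(2016, 9, 14)]  # week of Sept. 12: 2 links
    + [date(2016, 9, 19)] * 6                 # week of Sept. 19: 10 links
    + [date(2016, 9, 21)] * 4
)

counts = weekly_counts(toy_dates)
peak_week, ratio = peak_vs_typical(counts)
print(peak_week, ratio)
```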

How the IRA went local

Our most surprising finding is that the IRA heavily used local media: 30 percent of all its links led to local or regional news websites. That means the trolls shared five times as much local news content as junk news content. By comparison, our politically interested users pointed 1.9 percent of their links to local news sources and our average users only 0.3 percent.
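Shares like these can be computed by matching the domain of each link against lists of known junk news and local news sites. The Python sketch below shows the idea. The domain lists here are made-up placeholders, not the Merrimack College classification or the local news list used in the study, and the function names are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse

# Placeholder domain lists for illustration only (assumptions, not real lists).
JUNK_DOMAINS = {"junk-site.example"}
LOCAL_DOMAINS = {"city-herald.example", "county-tribune.example"}


def domain_of(url):
    """Extract a lowercase host name without port or leading 'www.'."""
    host = urlparse(url).netloc.lower().split(":")[0]
    return host[4:] if host.startswith("www.") else host


def categorize(url):
    """Label a link as 'junk', 'local' or 'other' by its domain."""
    domain = domain_of(url)
    if domain in JUNK_DOMAINS:
        return "junk"
    if domain in LOCAL_DOMAINS:
        return "local"
    return "other"


def category_shares(urls):
    """Return the fraction of links that falls into each category."""
    counts = Counter(categorize(u) for u in urls)
    total = sum(counts.values())
    return {cat: n / total for cat, n in counts.items()} if total else {}


# Toy links: 1 junk, 3 local, 6 other.
toy_urls = (
    ["https://www.junk-site.example/story"]
    + ["https://city-herald.example/a", "https://city-herald.example/b",
       "http://www.county-tribune.example/c"]
    + [f"https://national-news.example/{i}" for i in range(6)]
)

shares = category_shares(toy_urls)
print(shares)
print("local-to-junk ratio:", shares["local"] / shares["junk"])
```

With the reported figures, the same ratio is 30 percent divided by 6 percent, which gives the factor of five mentioned above.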

Some IRA accounts even pretended to be local news outlets themselves. They used Twitter handles such as “Atlanta_Online” or “KansasDailyNews” and tweeted heavily about the region they pretended to be located in, sharing articles from real local news outlets. Many of their tweets talked about “Trump,” “Clinton” or “#politics,” meaning that these local news sources were at least in part used to spread news about the presidential race.

Another set of IRA accounts masqueraded as partisans on the left or the right. Drawing on classifications from Patrick L. Warren and Darren L. Linvill of Clemson University, we also examined 14 IRA accounts that pretended to be white conservative Americans — using Twitter handles such as “USA_Gunslinger” or “SouthLoneStar” — and eight posing as left-leaning, usually black, partisans, with screen names such as “BlackToLive” or “TrayneshaCole.”

These partisan accounts also shared local news, framed in polarizing ways. Although both sides often tweeted about the police, crime and minorities, the right-leaning accounts tended to focus on crimes committed by minorities and to support law enforcement, while the left-leaning accounts emphasized crimes against minorities and police brutality.

Proportion of each news category shared by the IRA over time

But why?

We suspect that the IRA relied so heavily on local news sources because it believed that Americans trust their local media outlets more than other sources, a pattern that a 2017 Pew Research Center report documented.

The local-tweeting trolls also could have been “sleeper accounts.” By posing as legitimate news sources and sharing reliable information for months or years, they might have been trying to build a reputation and online following for more effectiveness in future influence campaigns.

Another possibility is that the IRA simply wanted to exacerbate polarization: amplifying reliable local news content in ways that confirm partisan prejudices could heighten it. Some of the fake partisan accounts amassed hundreds of likes and retweets on every link they shared, far more than the average IRA account.

Does the IRA know its geography?

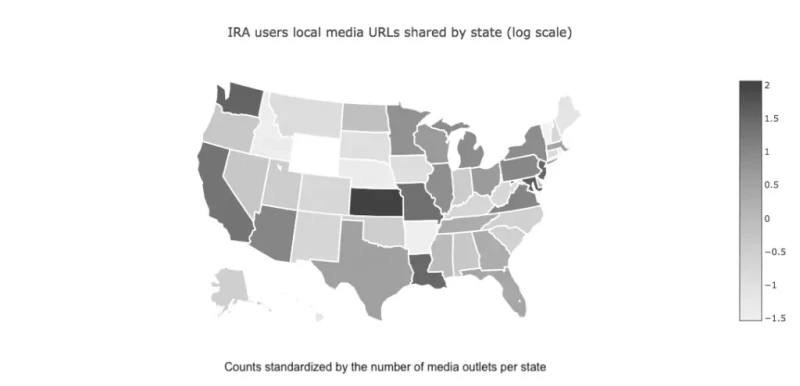

The IRA accounts’ geographical targeting was not especially sophisticated, at least for the 2016 presidential race. Most of the local news content the troll farm tweeted came from outlets based in California, Kansas and Missouri, none of which was considered a swing state in 2016.

Map of local news shared by IRA accounts

Lessons for the future

Our findings regarding junk news and local news shared by IRA trolls reveal that simply fighting fake news will not be enough to stop attempts at electoral manipulation on social media. That being said, links to news were not the only content shared by IRA trolls on Twitter, so there is more to be done to understand these accounts’ strategies.

Franziska Roscher is a PhD candidate in politics at New York University and a graduate research associate in the university’s Social Media and Political Participation lab.

Leon Yin is a research scientist in the Social Media and Political Participation lab.

Richard Bonneau is professor of biology and computer science at NYU and a co-director of the Social Media and Political Participation lab.

Jonathan Nagler is professor of politics at NYU and a co-director of the Social Media and Political Participation lab.

This article is one in a series supported by the MacArthur Foundation Research Network on Opening Governance that seeks to work collaboratively to increase our understanding of how to design more effective and legitimate democratic institutions using new technologies and new methods. Neither the MacArthur Foundation nor the network is responsible for the article’s specific content.